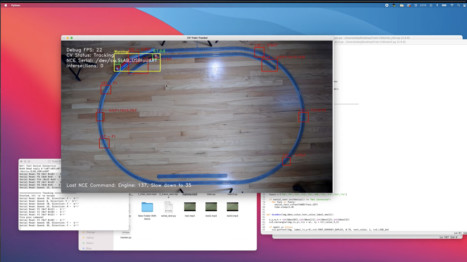

Running a small model train layout with Computer Vision (OpenCV)

Sharing another personal project – this time the objective was to get more comfortable with Python, as it has become so prevalent in ML. I’ve always wondered if it was…

Expanding my Porsche PTS Explorer with Augmented Reality and RealityKit!

This is a demo app I’m building to learn RealityKit, and to improve my SwiftUI and Combine skills too! This demo uses Augmented Reality to visualize automotive paint colors…

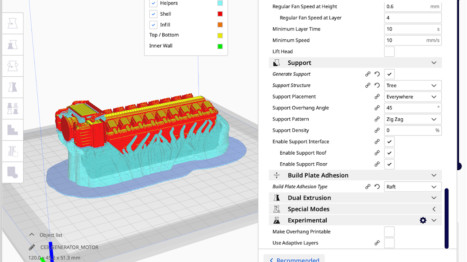

3D Printing, a work in progress.

Just getting started in 3D printing, picked up a Creality Ender3 v2 from Amazon. Using Cura slicer, and Blender for 3D modeling as I already knew it from previous projects.…

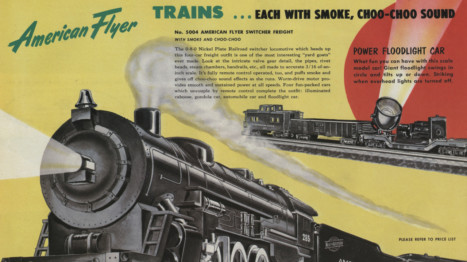

Fixing my Dad’s 1952 American Flyer Train Set

1952 4904T American Flyer Three Car Freight: American Flyer Locomotive 282 and Tender, 639 Box Car, 640 Hopper Car, 638 Caboose, 75 Watt Transformer, 12 Curve Track, 2 Straight Track,…

Adding real radio chatter to HO Metra via LokSound 5.

A few months ago I saw a really well-done Amtrak P42 (https://youtu.be/4mYZ1AqLjMo?t=244) that had real radio chatter, and I thought it added so much depth and realism to…

Silhouettes! Because, why not.

Just wanted to quickly share some drawings I did of my daughters. Somehow I got it in my head that I needed their silhouettes as art, so this was…

PTS Explorer v2

The next evolution of PTS Explorer – I ended up finding a nice GT2RS base model and created custom materials and lighting for it.

Walnut Speaker

Quarantine project: My brother and I each built a custom powered Bluetooth speaker using PDF instructions from https://kmakits.com. The instructions tell you which electronics are needed, and rough lumber dimensions.…

Custom Walnut top for IKEA SKARSTA Desk with magnetic tray.

Some pictures of building a walnut desktop for the IKEA SKARSTA standing desk. https://www.ikea.com/us/en/p/skarsta-desk-sit-stand-white-s59324818/. I was looking for a natural matte/satin finish for the Walnut so I went with Tried…

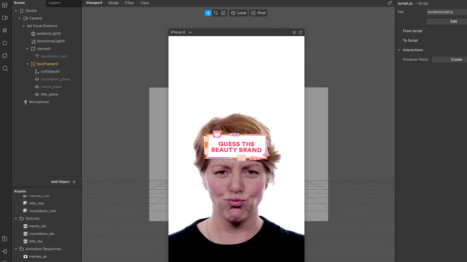

Beauty Brand Game – AR Filters using Spark AR Studio

A fun Instagram filter I helped out with on the coding end. This filter is 100% JavaScript and does not use the patch editor – needed more fine-tuned control/logic…